Introducing an ‘ML maturity ladder’ for biologics discovery and development

Many organizations confine machine learning in molecular discovery and design to isolated proof-of-concept projects. We propose a 5-level maturity ladder to illustrate how teams advance towards autonomous systems that learn from every experiment and guide discovery across entire pipelines.

Stef van Grieken · 15 min read

Machine learning (ML) is reshaping how biologics are engineered, accelerating discovery cycles, enabling smarter decisions, and opening new paths through an unimaginably vast design space. But the pace and breadth of change can feel overwhelming. What does “good” practice even look like for a discovery and development organization today? What might it look like tomorrow?

The promise of ML in biologics R&D is to deliver safe, effective therapeutics in less time, but achieving that potential will require clarity on how maturity is defined and measured.

In our work with leading pharmaceutical and industrial biotech organizations, we’ve seen a clear need for a shared language to describe ML maturity, one that helps teams and executives assess where they stand and how they can advance.

So we’re proposing a straightforward “maturity ladder” for biologics discovery and development. Inspired by the self-driving car industry’s SAE levels – which range from purely manual driving to full self-driving capability – our ladder charts progress as a climb toward greater autonomy, enabled by smarter sequence generation and faster learning from increasingly automated experimental cycles.

Our aim is to provide a simple reference that leaders can use to benchmark progress, align strategy, and guide investment. More importantly, we hope it sparks discussion across the R&D community about how the field can mature together.

The ML Maturity Ladder

From traditional to autonomous biologics R&D

Just as the automotive industry defines levels of self-driving capability, from manual control to high automation, biologics R&D is seeing its own progression in how machine learning contributes to discovery.

Level 0

No Machine Learning Used

Akin to SAE Level 0: full manual control, where the human does all the driving and the system offers no automation.

Traditional protein engineering that relies on semi-rational or rational approaches. This might involve introducing mutations, observing their effects, and combining beneficial changes, or applying expert knowledge and bioinformatics to guide where and how to make changes to sequences.

Level 1

Human Assessment

Akin to SAE Level 1: driver-assist features like lane-keeping or cruise control – the system provides guidance, but the human remains fully responsible for every action.

Tools such as AlphaFold are used to help scientists understand the likely structure or properties of a protein, but decisions about what to design or test still rest entirely with humans.

Level 2

ML Ranking and Selection

Akin to SAE Level 2: partial automation, where the system can control both speed and steering under human supervision – ML handles defined tasks while humans oversee and decide what to test next.

Individual computational scientists begin developing models. ML models play a more active role, predicting how well different known candidate proteins might perform on single properties, such as binding affinity, aggregation or immunogenicity. Each sequence is given a predicted-performance score for ranking, which scientists use to build heuristics for selecting the most promising candidates to test in the lab.

Level 3

ML Generation and Learning

Akin to SAE Level 3: conditional automation – the system can drive itself under limited conditions but still expects the human to monitor and take over when needed.

At this stage, organizations either begin developing their own enterprise-grade ML platforms or adopt existing ones to support scalable discovery workflows. ML models go beyond evaluating existing sequences, generating entirely new candidates for ranking and testing. This can be done without project-specific training data (i.e. zero-shot) or using prior assay results from hit-identification or lead optimization.

At the top of this level, the ML models consider multiple goals at once, such as binding strength, stability, toxicity, and manufacturability, and down-select sequences that balance those trade-offs. The software becomes part of an active learning system: it proposes new designs, selects the most promising set of candidates for testing, receives real-world experimental feedback, and learns from the results, closing the loop between ML systems and the wet lab.

Beyond the learning loop for individual programs, deeper organizational embedding of ML systems brings learning and accelerated progress across programs and departments as programs leverage each other's data. Scientists still decide what experiments to run, which programs to continue, and what additional assays to develop.

Level 4

AI Agents and Decision Making

Akin to SAE Levels 4 and 5: largely autonomous operation within defined limits, with optional human oversight.

At the final level, the software goes beyond designing sequence libraries and deciding on experimental setup, taking on a more agentic role, meaning it can act on its own analyses to make defined decisions. This can include choosing which programs to advance based on target product profiles, allocating experimental bandwidth, or refining assays to improve understanding of the target. The degree of human-in-the-loop oversight can vary by organization and program, but at this stage, required coordination is minimal and you have a fully integrated and automated system for engineering biomolecules.

What it really takes to ladder up

For autonomous vehicles, the highest level of maturity is a car that can drive itself anywhere, in any conditions, without human intervention. In biologics, we see the equivalent as a discovery system that can design new proteins, select the best candidates, trigger lab experiments, learn from the results, and continually improve with minimal human coordination. At that point, machine learning becomes more than a tool. It becomes a strategic guide for scientific discovery.

Reaching that level of autonomy depends not only on building smarter models, but on how effectively teams connect them to experimental science and structure their organizations around those feedback loops. In a future companion piece, we’ll explore the three foundational strands that make this climb possible – scope of ML application, data practices, and organizational integration – and show how the real race to ladder up in biologics is to advance all of them together.

Climbing the Ladder

A Practical Framework for ML Maturity in Biologics Discovery

The path from isolated proof of concept to sustained impact is not just about better models. It depends on tight alignment between ML and the underlying science, and on building an organization that can sustain a continuous learning loop. In practice, that work rests on three strands that must grow together: scope of ML application, data practices, and organizational integration.

This handbook explores those strands. It shows what strong foundations look like in leading R&D organizations and highlights clear signals you can use to judge where you stand – supported by an interactive self-assessment tool later in the guide. The aim is to help you identify the most important next steps as you climb the ladder of ML maturity. Let’s dive in.

The Three Strands of ML Maturity

Progress toward autonomous biologics discovery depends on simultaneously developing three interwoven strands:

Scope of ML Application

How broadly and deeply are ML tools used across your discovery pipeline? Moving beyond isolated pilots, mature teams extend ML across modalities and phases, turning it into a unifying driver of design and decision-making.

Experimental Data Practices

Are your experiments setting up your ML systems for success? Mature data practices tighten assay quality, speed up feedback, and ensure every experiment strengthens both candidate selection and the ML models behind it.

Organizational Integration

How embedded is ML in your workflows, culture, and decision-making? Higher maturity comes when ML sits at the heart of shared, reliable workflows that scientists can use independently and that guide day-to-day decisions.

Now let's break down each strand into its key components.

Scope of ML application

How broadly and deeply are ML tools used across your discovery pipeline?

In many organizations, ML never escapes the corner of the lab. It stays confined to narrow tasks or isolated proof-of-concept projects. Maturing the scope of ML application means expanding across three critical sub-strands: the biological modalities you work with, the phases of your R&D pipeline, and the properties of biologics candidates that you optimize for simultaneously. We’ll take each in turn.

Modalities

From Simple to Complex Biologics

What It Means

ML maturity in modalities reflects your ability to apply machine learning across increasingly complex biologic formats. Most organizations naturally start at the simpler end of the modality spectrum before addressing the more challenging, and potentially more rewarding, molecular architectures.

Why It Matters

Complex modalities such as multispecific antibodies and antibody–drug conjugates (ADCs) represent some of the most technically challenging and potentially high-impact therapeutics. Organizations that can extend ML into these domains will unlock a larger space of possible drugs and accelerate the development of next-generation biologics.

The Maturity Spectrum

Low Maturity:

ML applied to a single, simple modality – usually peptides or single-chain fragments.

Every new modality requires starting from scratch, with little reuse of past work.

Minimal data sharing or transfer of learning between modality types.

Each modality relies on just one primary assay or data type.

High Maturity:

ML applied across a wide range of modalities, from peptides and VHHs to full IgGs, multispecifics, and ADCs.

Transfer learning allows progress in one modality to accelerate work in others.

A unified platform supports multiple biologics formats within the same workflow.

Insights flow across modalities, shaping stronger design strategies.

Real-World Excellence

AbbVie: Protein Language Models for Bispecific Engineering

Polyreactivity is a critical developability issue that causes antibodies to stick to unintended targets, disrupt assays, and trigger off-target effects that derail development. AbbVie used a Protein Language Model (PLM) approach to predict polyreactivity. The model can handle diverse antibody formats; it was validated across standard mAbs, VHH-Fc, as well as 12 distinct subtypes of bispecific antibodies.

Source: Nature PMC

Sanofi: Custom AI for mRNA Therapeutics

Sanofi has built CodonBERT, a large language model for designing mRNA therapeutics, trained on a dataset of 10 million mRNA sequences. Unlike traditional protein models, CodonBERT understands how codon choice affects not just the resulting protein but also RNA stability, immunogenicity, and translational efficiency. The model has cut mRNA construct design time in half.

Sanofi has also developed RiboNN, a deep convolutional neural network that predicts translation efficiency with twice the accuracy of previous models. The model was trained using RiboBase, which contains more than 10,000 ribosomal profiling experiments.

Source: Sanofi Digital & AI

Phases

Connecting Your R&D Pipeline

What It Means

ML maturity reflects how well your systems link the phases of drug discovery and development, allowing insights from hit ID, lead optimization, and developability assessments to inform each other. It’s about creating a pipeline where models don’t just operate in isolated steps, but continuously build on what the science learns at every stage.

Why It Matters

Drug development is inherently sequential, but the insights aren't. When ML systems can learn from how early-stage predictions correlate with later-stage outcomes, models become dramatically more effective at identifying candidates that will succeed downstream. This prevents the common problem of optimizing for early metrics that ultimately don't translate to clinical success.

The Maturity Spectrum

Low Maturity:

ML used in only one stage of development, often limited to early discovery.

Little or no data connectivity between phases.

Each phase builds its ML work from scratch.

Phase-specific ML models do not speak to each other.

High Maturity:

ML connected across all major program phases (i.e. hit ID, lead optimization, and developability).

Longitudinal learning. For example, insights from hit ID screening inform lead-optimization strategies.

Models continuously improve by learning from downstream experimental results.

A unified data architecture supports learning across the entire pipeline.

Real-World Excellence

Bristol Myers Squibb: "Predict First" Before Getting Physical

Bristol Myers Squibb has institutionalized a "predict first" strategy that fundamentally changes how it does discovery. As of 2025, all of its small molecule programs use proprietary AI/ML to evaluate efficacy and liabilities before synthesis—up from just 5% in 2021. About half of its large molecule programs are taking the same approach. Mike Ellis, SVP of Discovery & Development Sciences, says BMS doesn’t “go into the lab and make things unless we have a good idea of what the forecast looks like.” And in BMS’s CELMoD agents program for sickle cell disease, computational models enabled researchers to move beyond a developmental "plateau" they had previously reached, taking the program in new directions that integrated all target properties into a single clinical candidate.

Source: PharmaVoice

Pfizer: Accelerating Clinical Trials and Manufacturing

Pfizer demonstrates phase maturity in later R&D stages with its Smart Data Query (SDQ) tool, developed in just six weeks with Saama Technologies. SDQ automated clinical trial data cleaning, reducing the time from final data collection to review-ready datasets to just 22 hours – a process that typically takes weeks. "It saved us an entire month" on COVID-19 vaccine development, says Demetris Zambas, Pfizer VP and Global Head Data Monitoring and Management. Now used in over half of Pfizer's trials, the tool exemplifies how late-phase ML excellence creates high-quality clinical data that feeds back to improve early-stage predictive models – a virtuous cycle connecting the entire pipeline.

Source: Pfizer News

Properties

Multi-Objective Optimization

What It Means

ML maturity in property optimization reflects the ability to evaluate and balance multiple design requirements for each candidate molecule, managing the trade-offs rather than optimizing single properties in isolation.

Why It Matters

Therapeutic proteins must meet many requirements at the same time. A potent antibody that aggregates in manufacturing or triggers unwanted immune responses is worthless. Multi-property optimization reveals these trade-offs early, helping teams avoid late-stage failures and shorten development timelines.

The Maturity Spectrum

Low Maturity:

ML predicts or optimizes one molecular property at a time.

Properties are improved sequentially – first binding, then stability, and so on.

Scientists manually balance competing properties.

No explicit modeling of trade-offs between properties.

High Maturity:

ML evaluates binding, stability, immunogenicity, manufacturability, and other key requirements together.

Models map out the best achievable trade-offs between these properties.

ML captures how improvements in one property affect others.

Candidate designs are generated to balance multiple objectives from the outset.
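One common way to express "the best achievable trade-offs" is a Pareto front: the set of candidates that no other candidate beats on every objective at once. The sketch below is a minimal illustration of that idea, not a description of any particular platform. The variant names, the two objectives, and all scores are made up, and it assumes every objective is scaled so that higher is better.

```python
from typing import Dict, List

# Hypothetical predicted scores; higher is better for every objective.
Candidate = Dict[str, float]


def dominates(a: Candidate, b: Candidate, objectives: List[str]) -> bool:
    """True if `a` is at least as good as `b` everywhere and strictly better somewhere."""
    at_least_as_good = all(a[o] >= b[o] for o in objectives)
    strictly_better = any(a[o] > b[o] for o in objectives)
    return at_least_as_good and strictly_better


def pareto_front(candidates: Dict[str, Candidate], objectives: List[str]) -> List[str]:
    """Return the names of candidates that no other candidate dominates."""
    front = []
    for name, scores in candidates.items():
        dominated = any(
            dominates(other, scores, objectives)
            for other_name, other in candidates.items()
            if other_name != name
        )
        if not dominated:
            front.append(name)
    return front


# Illustrative, made-up predictions (not real assay data).
predicted = {
    "variant_A": {"binding": 0.9, "stability": 0.4},
    "variant_B": {"binding": 0.7, "stability": 0.8},
    "variant_C": {"binding": 0.6, "stability": 0.7},  # worse than variant_B on both
    "variant_D": {"binding": 0.3, "stability": 0.9},
}
front = pareto_front(predicted, ["binding", "stability"])
print(front)
```

Here variant_C drops out because variant_B is better on both objectives, while the remaining three each represent a different balance between binding and stability. Choosing among them is a judgment about priorities rather than a ranking problem.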

Real-World Excellence

Amgen: Sequence-Based Viscosity Prediction

Amgen tackled one of the most challenging developability problems in biologics: high viscosity in concentrated antibody formulations. They developed a sequence-based ML model trained on 83 carefully curated antibodies, achieving more than 80% accuracy in classifying sequences as high or low viscosity. Applied to a problematic monoclonal antibody (anti-IL-13 mAb), the model screened in silico-designed variants to prioritize candidates for lab testing. From just 16 synthesized variants, they reduced viscosity at 150 mg/mL by 60%, from an unworkable 34 cP to a clinically viable 13 cP. The work demonstrates how ML-powered property prediction directly solves high-value engineering challenges.

Source: MDPI Biomolecules

Novartis: SUMO – In Silico Sequence Assessment Using Multiple Optimization Parameters

The SUMO workflow was developed at Novartis as an automated in silico screen to assess the early developability of antibody and VHH sequences. For each sequence, it builds homology models of the variable regions and calculates a wide set of physico-chemical and structural features, including aggregation risk, charge properties, hydrophobic patches, predicted chemical liabilities, solubility, and MHC-II binding–based immunogenicity.

These readouts are combined into dashboards and composite risk flags, allowing teams to rank and cluster thousands of hits by their overall developability profile, not just affinity. And because SUMO supports multi-parameter optimization, it is helping scientists redesign sequences to reduce specific liabilities – such as aggregation hot spots or deamidation risk – while keeping other properties in balance.

In practice, this lets Novartis select leads that sit in stronger positions on a multi-objective developability landscape, rather than picking the tightest binder and hoping downstream engineering will fix issues. SUMO is a clear example of a mature, computation-driven approach to multi-property optimization.

Source: PubMed

Experimental Data Practices

Are your experiments setting up your ML systems for success?

No matter how advanced your models, an ML system can only be as strong as the experimental evidence it learns from. While fields like image recognition are powered by internet-scale datasets, experimental biology data is typically sparse, noisy, inconsistent, and expensive to generate.

That’s why mature data practices focus on three connected elements – quantity, quality, and workflow. We’ll take them in order.

Quantity

Scale and Throughput

What It Means

Data quantity maturity reflects how many sequences your wet lab can test in each design–build–test cycle and how fast those results feed back into the ML system. It’s about the combined capacity and tempo of your experimental workflow.

Why It Matters

ML models learn best from large, diverse datasets. Without sufficient quantity, models risk going "out of domain" when generating novel proteins, which means poor predictions. High throughput also enables active learning—a virtuous cycle in which models propose designs, receive rapid experimental feedback, and improve with each iteration. Speed matters because it allows more learning cycles within a project timeline.

The Maturity Spectrum

Low Maturity:

Small datasets (dozens of sequences).

Few experimental properties measured.

Manual, low-throughput assays.

Long turnaround between ML design and experimental results (weeks to months).

Single-plate experiments.

High Maturity:

High-throughput screening as standard, using 96- or 384-well plates.

Large, diverse sequence libraries per cycle (hundreds to thousands).

Fast feedback loops (days rather than weeks).

Multiple properties measured in the same cycle.

Systematic exploration of the design space.

Real-World Excellence

Sanofi: Industrial-Scale Automated Platform

Pioneering high-throughput biologics engineering, Sanofi has engineered an end-to-end automated platform. It links in silico design, DNA cloning, compartmentalized mammalian cell expression, and multi-parameter screening, allowing teams to test more than 10,000 variants at a time.

In one optimization campaign for a CODV-Ig bispecific antibody, the platform screened over 25,000 variants, delivering a more than 1,000-fold boost in potency and major gains in production titers. It has now run more than 30 campaigns and serves as a dedicated data-generation engine for training the latest ML applications.

Source: Taylor & Francis mAbs

Genentech: Active Learning Strategy

Genentech’s Lab-in-the-Loop uses an intelligent, iterative approach to scaling antibody design. Across four targets, the system designed and tested more than 1,800 unique variants. Its core innovation is the loop itself: experimental results from each round are fed straight back into the ML models, which then design improved variants for the next cycle.

Active learning algorithms pre-screen thousands of in silico designs and select those deemed most likely to teach the model something new. This targeted search makes far better use of limited experiments, building more generalizable models over time compared with brute-force screening approaches.

Source: bioRxiv
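To make the idea of selecting designs "most likely to teach the model something new" concrete, here is a minimal sketch of one widely used heuristic: pick the candidates on which an ensemble of models disagrees most. This is an illustration only. The source does not say which acquisition strategy Genentech uses, and the `select_informative` helper, the toy ensemble, and the sequences are all hypothetical.

```python
from statistics import pstdev
from typing import Callable, Dict, List, Sequence

# A "model" here is anything that maps a sequence to a predicted score.
Model = Callable[[str], float]


def select_informative(
    designs: Sequence[str], ensemble: Sequence[Model], batch_size: int
) -> List[str]:
    """Pick the designs on which the ensemble members disagree most."""
    disagreement: Dict[str, float] = {
        seq: pstdev([model(seq) for model in ensemble]) for seq in designs
    }
    ranked = sorted(designs, key=lambda seq: disagreement[seq], reverse=True)
    return ranked[:batch_size]


# Toy stand-ins for trained predictors (hypothetical): each scores a
# sequence from a simple composition feature with a different weight.
ensemble = [
    lambda seq: seq.count("W") * 1.0,
    lambda seq: seq.count("W") * 0.5,
    lambda seq: seq.count("W") * 0.1,
]
designs = ["AAAA", "AWAA", "WWAW", "AWWA"]
batch = select_informative(designs, ensemble, batch_size=2)
print(batch)
```

The toy ensemble agrees perfectly on "AAAA", so testing it would add little. The sequences with the widest spread of predictions are sent to the lab first, because a measurement there resolves the most uncertainty.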

High-throughput screening generates 100-1000x more training data per cycle, enabling models to learn exponentially faster

Quality

Reproducibility and Controls

What It Means

Data quality maturity reflects how reproducible, accurate, and well-controlled your experimental measurements are. It’s about giving ML systems consistent, trustworthy inputs so they can learn from genuine biological patterns, not noise.

Why It Matters

When repeated tests on the same sequence produce conflicting results, ML models can’t learn reliable patterns. It’s the classic “garbage in, garbage out” problem, only this garbage costs millions. Strong quality control at the bench directly improves model performance, which is why industry-leading teams set strict QC thresholds and exclude low-quality data from model training entirely.
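As a minimal sketch of what a QC gate in front of model training could look like, the example below keeps a sequence only if its replicate measurements agree within a coefficient-of-variation cutoff. The 20% threshold, the minimum replicate count, the sequence IDs, and the readings are all assumptions for illustration. Appropriate thresholds depend on the assay.

```python
from statistics import mean, stdev
from typing import Dict, List

MAX_CV = 0.20        # assumed cutoff, not an industry standard
MIN_REPLICATES = 2   # stdev needs at least two values


def passes_qc(replicates: List[float]) -> bool:
    """Accept a sequence only if its replicates agree closely enough."""
    if len(replicates) < MIN_REPLICATES:
        return False
    average = mean(replicates)
    if average <= 0:
        return False  # CV is not meaningful for non-positive means
    return stdev(replicates) / average <= MAX_CV


# Made-up replicate readings per sequence (arbitrary units).
measurements: Dict[str, List[float]] = {
    "seq_001": [10.0, 10.5, 9.8],  # tight replicates
    "seq_002": [4.0, 9.0, 1.5],    # conflicting replicates
    "seq_003": [7.2],              # no replicate to compare against
}

# Only sequences that pass QC contribute a training label.
training_set = {
    seq: mean(values) for seq, values in measurements.items() if passes_qc(values)
}
print(training_set)
```

Note that the rejected measurements are filtered out of the training set rather than deleted: keeping them in the raw record makes it possible to revisit the threshold later.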

The Maturity Spectrum

Low Maturity:

Poor or missing controls.

Unclear detection limits and error margins.

Inconsistent assay results between runs.

No standardization across labs or programs.

Precious raw data discarded, with only summary values retained.

High Maturity:

Standardized, validated assays with documented reproducibility.

Rigorous controls on every plate.

Clearly defined detection limits and confidence intervals.

Cross-lab standardization protocols in routine use.

Full experimental provenance preserved, with quality checks before data enters the ML pipeline.

Real-World Excellence

Roche: "FAIR Data by Design"

One way large organizations strengthen reproducibility and controls is by making data itself easier to find, use, and trust. That is the goal of the FAIR principles, which hold that data should be Findable, Accessible, Interoperable, and Reusable. FAIR data makes it far easier for ML systems to trace where a measurement came from and compare like with like.

Roche has adopted a FAIR-by-design approach in its core data infrastructure. At the center is the Roche Dataset Portal, a catalog that applies FAIR principles to give unified discovery and access across more than 20 source applications, including clinical, imaging and omics data. Alongside this, Roche has implemented a Global Data Standards Repository that defines common formats and vocabularies so that datasets produced in different parts of the company can be interpreted consistently.

Roche’s leadership emphasizes that this kind of prospective “FAIRification” takes more effort up front but “pays back multiple times” by avoiding the costly harmonization of legacy data.

Source: Pistoia Alliance FAIR Toolkit

Novartis: FAIR Data Hub for AI

Novartis created data42, a program that aimed to bring together around 2 million patient-years of clinical data and more than 20 petabytes of R&D data in a single, “machine-learnable” platform. By standardizing how data is stored and accessed, the company aimed to slash data-preparation time from weeks or months to hours, directly speeding up research. Novartis ultimately cut the program because “building an industry platform was too complex for them”, just a few months before ChatGPT launched.

Source: Handelsblatt

Workflow

Automated Data Integration

What It Means

Workflow maturity reflects how data moves from experimental instruments into ML systems, and whether that process preserves fidelity while minimizing human intervention.

Why It Matters

Manual data handling introduces errors and strips away information: when scientists reduce 96-well plate results to a spreadsheet, important patterns in the raw data are lost. Automated workflows prevent transcription mistakes, preserve full experimental context, and accelerate feedback loops – the foundation for true active learning.
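The difference between a summary spreadsheet and a provenance-preserving record can be sketched in a few lines. In the hypothetical data structure below, each reading keeps its plate, well, instrument, and run, and the per-sequence summary is derived from the raw records rather than replacing them. All field names and values are illustrative assumptions, not a real LIMS schema.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass(frozen=True)
class WellReading:
    """One raw measurement together with the context needed to interpret it."""
    plate_id: str
    well: str          # row letter plus column number, e.g. "A1"
    sequence_id: str
    raw_signal: float
    instrument: str
    assay_run: str


def summarize(readings: List[WellReading]) -> Dict[str, float]:
    """Derive the per-sequence mean; the raw readings stay available."""
    by_sequence: Dict[str, List[float]] = {}
    for reading in readings:
        by_sequence.setdefault(reading.sequence_id, []).append(reading.raw_signal)
    return {seq: mean(values) for seq, values in by_sequence.items()}


def is_edge_well(reading: WellReading) -> bool:
    """True for wells on the outer rows or columns of a 96-well plate."""
    row, column = reading.well[0], reading.well[1:]
    return row in "AH" or column in ("1", "12")


# Made-up readings for illustration.
readings = [
    WellReading("plate_07", "A1", "seq_001", 1.20, "reader_2", "run_01"),
    WellReading("plate_07", "C5", "seq_001", 1.30, "reader_2", "run_01"),
    WellReading("plate_07", "H12", "seq_002", 0.40, "reader_2", "run_01"),
]
summary = summarize(readings)
edge_wells = [r.well for r in readings if is_edge_well(r)]
print(summary, edge_wells)
```

A spreadsheet holding only the per-sequence means could not answer a question like "were the outliers all in edge wells?". Because the well position travels with each reading, that check remains possible after the fact.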

The Maturity Spectrum

Low Maturity:

Manual data transfer via spreadsheets and email.

Raw instrument data discarded after summarization.

Frequent human transcription errors.

No direct connection between lab instruments and ML systems.

Data silos across teams and programs.

Days or weeks to get data into the ML pipeline.

High Maturity:

Automated data capture directly from instruments.

Laboratory Information Management Systems (LIMSs) and Electronic Lab Notebooks (ELNs) integrated with ML platforms.

Full raw data and provenance preserved.

Centralized data infrastructure shared across the organization.

Built-in validation and error checking.

Real-time or near real-time data available to ML systems.

Real-World Excellence

Pfizer: TetraScience Automated Data Platform

Pfizer's collaboration with TetraScience exemplifies mature workflow automation. The TetraScience platform functions as a central data cloud that automatically ingests, centralizes, and harmonizes experimental data from across Pfizer's laboratory ecosystem—connecting scientific instruments, ELNs, and LIMSs into a unified pipeline. According to TetraScience, scientific data retrieval became 66% faster, data management costs dropped by $2.5 million, and scientists’ administrative work fell by 20,000 hours (no reference timeframe provided).

Source: TetraScience

AbbVie: Collaborative Hub

The AbbVie R&D Convergence Hub (ARCH) is one of the largest data integration platforms in the biopharmaceutical industry. It brings together data from more than 200 internal and external sources, giving teams collaborative access to over 2 billion data points. By breaking down historical silos, ARCH creates a unified, searchable asset that supports the entire discovery pipeline. Scientists use it to aggregate disease knowledge, surface hidden patterns, run analyses for target identification and validation, and guide drug design with integrated computational models. It’s a clear example of how connected, accessible data drives value across functions.

Source: AbbVie Science

Organizational Integration

Is machine learning embedded in your day-to-day work, or trapped in silos?

Technology alone doesn’t transform discovery – organizations do. Even the most advanced ML platform is useless if scientists don’t use it, trust it, or weave it into their workflows. That’s why organizational maturity hinges on two factors: the integration of ML into core processes and access to ML across diverse teams. When both are in place, ML becomes a strategic capability rather than languishing in one-off projects and optimistic PowerPoint presentations.

Integration

Strategic Embedding

What It Means

Integration maturity reflects where ML sits in your organization's decision-making hierarchy – from isolated proof-of-concept projects to core strategic planning.

Why It Matters

When ML is relegated to the sidelines, it can't deliver transformational impact. Strategic integration means ML shapes which programs move forward, how resources are allocated, and what questions the organization asks. This is where ML shifts from making individual experiments better to making the entire discovery strategy smarter.

The Maturity Spectrum

Low Maturity:

ML limited to isolated pilots or proof-of-concept work.

Runs outside core R&D workflows.

Used for “nice-to-have” analyses, not critical path decisions.

Little or no impact on program selection or resource allocation.

Computational teams treated mainly as a service function.

High Maturity:

Executive leadership understands and champions ML capabilities.

ML integrated into core decision-making, from program advancement to portfolio prioritization.

ML considerations built into target product profiles and study designs from the start.

Cross-functional teams with embedded ML expertise on key programs.

ML capabilities actively shape which projects the organization pursues and how it executes them.

Real-World Excellence

Genentech: Prescient Design Acquisition

Genentech’s 2021 acquisition of Prescient Design is a clear example of strategic ML integration. Instead of operating the startup as a separate unit, Genentech embedded the team directly into its Research and Early Development organization. Prescient’s founders moved into senior leadership roles, signalling that ML was now a core pillar rather than a support function. The combination of Prescient’s ML capabilities with Genentech’s biology expertise and proprietary data helped accelerate their lab-in-the-loop antibody design platform. It’s a strong illustration of acquiring top-tier ML talent and integrating it effectively.

Source: Genentech

AstraZeneca: Turbine Lab-in-the-Loop Partnership

AstraZeneca’s collaboration with the AI-driven biotech Turbine for antibody–drug conjugate (ADC) discovery shows what mature external partnership looks like. It isn’t merely transactional – it’s a tightly integrated, iterative workflow. AstraZeneca provides proprietary ADC datasets; Turbine’s models analyze them and recommend specific cell lines for testing in AstraZeneca’s labs. The experimental results then feed straight back into Turbine’s models, creating a closed learning loop. This setup demands high trust, data transparency, and smooth operational integration, effectively treating the external partner as an extension of the internal R&D organization.

Source: Pharma Industrial India

Organizations with ML in strategic decisions report 2-3x higher ROI from ML investments compared to those using it only tactically

Access

Democratized Usage

What It Means

Access maturity reflects who can meaningfully work with ML tools – whether they sit with a small group of computational specialists or are accessible to bench scientists running ML-guided experiments independently.

Why It Matters

The “ML expert as gatekeeper” model doesn’t scale. When only a handful of specialists can run analyses, ML becomes a bottleneck instead of an accelerant. Democratized access lets discovery scientists use ML in their daily decision-making, multiplying the impact of every ML investment. As one Cradle client told us: "The goal isn't to hire more ML PhDs—it's to make every scientist ML-capable."

The Maturity Spectrum

Low Maturity:

ML tools usable only by computational experts.

Wet-lab scientists must submit requests and wait for analyses.

ML team functions mainly as a consultant or service group.

Limited documentation and training for non-experts.

Custom code lives on individual laptops with fragile workflows.

ML capacity becomes a bottleneck, limiting the number of programs supported.

High Maturity:

Self-service ML tools accessible to bench scientists.

Intuitive interfaces that require minimal computational expertise.

Enterprise-grade platforms with robust, shared infrastructure.

Comprehensive training programs and ongoing support.

Scientists can design, run, and interpret ML-guided experiments independently.

ML scales across dozens of concurrent programs without becoming a bottleneck.

Real-World Excellence

Merck: GPTeal Platform

Merck has accelerated AI democratization with GPTeal, a proprietary generative AI platform – an “in-house ChatGPT” – that provides secure, self-service access to leading large language models. By early 2025, more than 50,000 employees, roughly two-thirds of the company’s staff, were using it every month. GPTeal allows teams across functions to automate routine cognitive work, from summarizing literature and drafting reports and emails to generating code and refining regulatory documents. It shifts AI from a specialist R&D capability to an organization-wide productivity engine.

Source: IntuitionLabs

Sanofi: "Expert AI" vs "Snackable AI" Strategy

Sanofi has adopted a deliberate dual strategy for AI accessibility – a sign of mature deployment. Its “Expert AI” covers the advanced models built for R&D and Manufacturing scientists, such as CodonBERT for mRNA design and SimplY for manufacturing yield analytics. “Snackable AI” refers to simple, intuitive tools for the wider workforce, including Plai, a platform used daily by 20,000 employees to provide a 360° view of company activity, and Concierge, an internal GenAI chatbot for everyday tasks. This two-tier approach reflects a sophisticated understanding that successful adoption requires both cutting-edge specialist tools and easy-to-use applications that empower the entire organization.

"Any company that thinks it can build its own enterprise software is probably kidding itself. We needed infrastructure that 'just works' so our scientists could focus on biology, not debugging code."

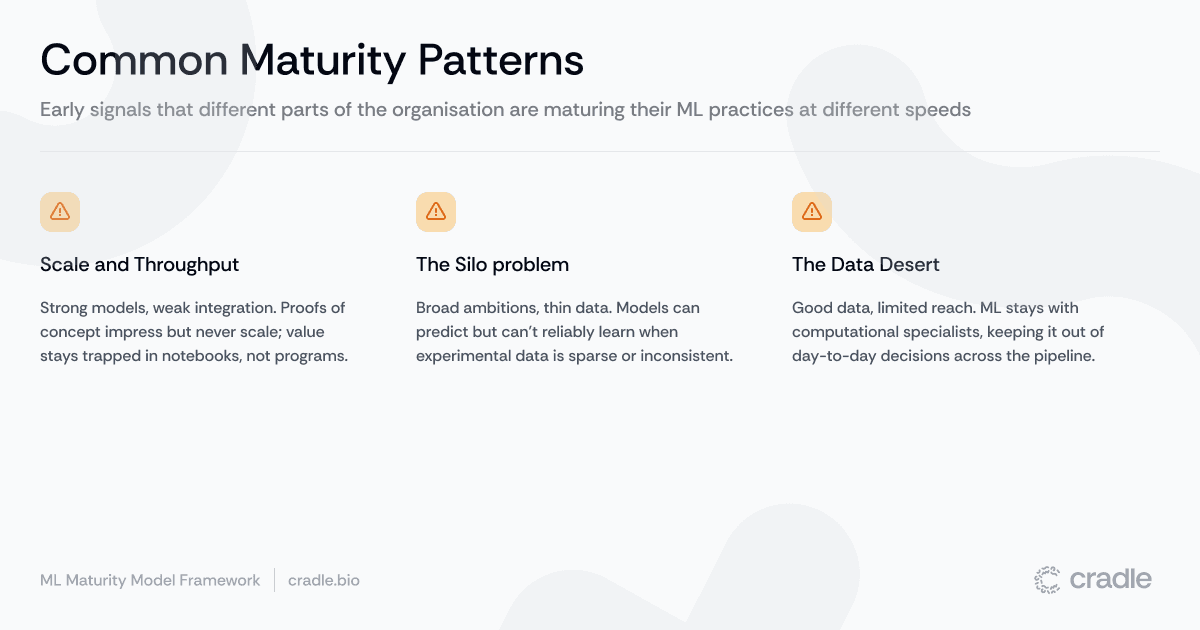

Common Maturity Patterns We See

When we map real organisations onto our ML maturity framework, we don’t see neat, diagonal lines of equal maturity across all eight substrands. Instead, we see a handful of patterns that show up again and again.

The Pilot Trap

Strong technical capabilities – sophisticated models, a solid data science team – but weak organisational integration. ML produces impressive proofs of concept that never scale beyond a handful of projects. Value stays stuck in slide decks and Jupyter notebooks rather than reaching real programs.

The Data Desert

Ambitious scope – applying ML across modalities and phases – but immature data practices. Experimental data is sparse, inconsistent or hard to reuse. Models make predictions, but they cannot reliably learn from new experiments.

The Silo Problem

Good data and capable models, but access is limited to computational experts. Bench scientists depend on a small group of specialists to run analyses, so ML impact stays confined to a few teams rather than becoming part of day-to-day decision-making across the pipeline.

These patterns are not failure modes but early signals that different parts of the organisation are maturing their ML practices at different speeds. The next step is to quantify where you are today and where your bottlenecks lie.

Assess Your ML Maturity

The three strands and eight substrands together give you a practical framework for assessing ML maturity in biologics discovery. No organisation excels in every area – it’s still early days. What matters is knowing where you are strong, where you lag, and what to do about it.

Our simple matrix helps you:

Identify your current position across each strand and substrand.

Spot imbalances where some areas (e.g. model capability) are far ahead of others (e.g. organisational embedding).

Prioritise investments based on which bottlenecks most limit your progress.

Track improvement over time with concrete milestones on each dimension.
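
For teams that prefer to keep the assessment in a script rather than a spreadsheet, the four steps above can be sketched in a few lines of Python. This is a minimal illustration, not part of the framework itself: the 1-to-5 scoring scale, the substrand names, the scores and the helper functions (`rank_bottlenecks`, `lagging_substrands`, `progress`) are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical self-assessment on an assumed 1-5 scale (1 = lowest maturity).
# Substrand names and scores are illustrative placeholders only.
current = {
    "model capability": 4,
    "data practices": 2,
    "organisational embedding": 1,
    "democratized usage": 2,
}

def rank_bottlenecks(scores):
    """Substrands ordered from weakest to strongest (ties keep input order)."""
    return sorted(scores, key=scores.get)

def lagging_substrands(scores, gap=1.0):
    """Substrands scoring at least `gap` points below the overall average."""
    average = mean(scores.values())
    return [name for name, score in scores.items() if average - score >= gap]

def progress(before, after):
    """Per-substrand change between two assessments of the same substrands."""
    return {name: after[name] - before[name] for name in before}

# A later, equally hypothetical assessment used to track improvement.
later = {**current, "data practices": 3, "organisational embedding": 2}

print(rank_bottlenecks(current))    # weakest areas first
print(lagging_substrands(current))  # areas well behind the average
print(progress(current, later))     # change per substrand over time
```

The `gap` threshold is arbitrary. In practice you would choose it to match how much imbalance between substrands you are willing to tolerate before redirecting investment.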

The Road Ahead

The journey to ML maturity in biologics discovery is a systematic climb that requires coordinated progress across technology, data infrastructure and organisational change.

The organisations making the greatest strides are not necessarily those with the most sophisticated models, but those that have built the systems and culture that let ML learn from every experiment and inform every decision.

A useful parallel comes from autonomous vehicles. Progress didn’t hinge on a single breakthrough in modelling. It required simultaneous advances in sensing, compute, data collection and real-time decision systems, all working together before higher levels of autonomy became possible. Increasing the automation of biologics discovery follows a similar pattern: you only unlock step-changes in capability when all three strands mature together.

The framework we’ve outlined here provides a road map for that journey.

Continue the Conversation

We have shared this maturity framework as a conversation starter for the field of biologics discovery. We would welcome your perspective on what ML maturity looks like in practice. How does your organisation approach these challenges? Did we miss any important dimensions? What other real-world examples of ML maturity are worth shouting about? Get in touch.
